As AI technology becomes deeply integrated with the real economy, AI inference is rapidly shifting from centralized cloud data centers to devices and the edge. IDC predicts that by 2025, over 70% of enterprise data globally will be processed at the edge rather than in traditional cloud data centers. Against this backdrop, data security and compliance, low-latency real-time response, and adaptability to a full range of deployment scenarios have become core requirements for enterprise AI deployment. The continued enforcement of the Data Security Law of the People's Republic of China and the Personal Information Protection Law further makes "data stays in the factory, processing is done locally" a hard requirement for AI deployment in fields including industrial manufacturing, government and enterprise services, and consumer terminals.

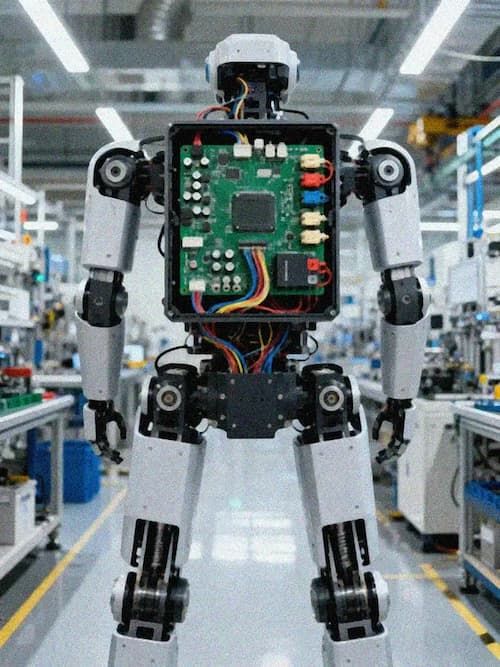

The RK3588 NPU Embedded Core Board, with its mature edge AI computing power, heterogeneous computing architecture, and industrial-grade stability, is becoming the core computing foundation for localized AI inference. It is driving a new paradigm shift in AI applications from "cloud-dependent" to "edge-autonomous," ensuring the security of the entire data loop while enabling efficient, stable, and low-cost AI inference deployment.

In the Era of Edge AI, Localized Inference Becomes an Industry Necessity

The traditional cloud-based approach to AI inference uploads raw data collected at the terminal to a cloud data center for inference, then sends the resulting decisions back to the terminal for execution. As AI has been deployed at scale, this approach has exposed several unavoidable pain points, making localized AI inference the natural direction for the industry.

Data security and compliance are the baseline requirement for enterprise AI deployment. For industrial manufacturers, production process data, product quality inspection data, and equipment operation data are all core trade secrets, and uploading them to the cloud exposes them to leakage and theft. In scenarios involving facial recognition and behavioral analysis, cross-network data transmission further complicates compliance with personal information protection rules. Localized AI inference keeps the entire data processing loop on site, removes the need for cross-network transmission, and addresses data security and compliance challenges at their source.

Real-time performance is a core requirement for industrial-grade AI applications. Scenarios such as industrial visual quality inspection, real-time equipment control, autonomous driving, and security emergency response all require millisecond-level end-to-end AI inference responses. Cloud processing is affected by network transmission latency and bandwidth fluctuations, failing to meet the real-time requirements of these scenarios. Only local edge-side inference can ensure the rapid issuance and execution of decision-making instructions, avoiding production losses and security risks caused by delays.

Optimizing bandwidth and operating costs is a core consideration for large-scale enterprise deployment. Industrial cameras, high-definition surveillance systems, environmental sensors, and other terminal devices can generate terabytes of raw data daily. Uploading all of this data to the cloud would incur extremely high bandwidth costs and cloud storage and computing power rental fees. Localized AI inference can complete data cleaning, feature extraction, and inference calculations on the edge, requiring only the transmission of key results, significantly reducing bandwidth consumption and long-term operating costs.
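
The bandwidth argument above can be illustrated with a rough calculation. The following is a minimal sketch, not a measurement: the camera resolution, frame rate, uncompressed RGB format, and the 200-byte result message are all illustrative assumptions that do not come from the article, and real deployments usually compress video before upload.

```python
from dataclasses import dataclass

SECONDS_PER_DAY = 86_400


@dataclass
class CameraStream:
    """Hypothetical camera parameters used only for this estimate."""
    width: int
    height: int
    fps: int
    bytes_per_pixel: int = 3  # assumption: uncompressed 8-bit RGB


def raw_bytes_per_day(stream: CameraStream) -> int:
    """Bytes produced per day if every raw frame were uploaded."""
    frame_bytes = stream.width * stream.height * stream.bytes_per_pixel
    return frame_bytes * stream.fps * SECONDS_PER_DAY


def result_bytes_per_day(results_per_second: int, bytes_per_result: int) -> int:
    """Bytes produced per day if only inference results were uploaded."""
    return results_per_second * bytes_per_result * SECONDS_PER_DAY


if __name__ == "__main__":
    cam = CameraStream(width=1920, height=1080, fps=30)
    raw = raw_bytes_per_day(cam)
    # Assumption: one 200-byte result message per frame.
    results = result_bytes_per_day(results_per_second=30, bytes_per_result=200)
    print(f"raw upload:     {raw / 1e12:.2f} TB/day")
    print(f"results only:   {results / 1e9:.2f} GB/day")
    print(f"reduction:      {raw // results}x")
```

Under these assumptions, a single uncompressed 1080p camera already produces raw data on the order of terabytes per day, while the result stream stays well under a gigabyte.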

Meanwhile, increasingly scenario-specific AI applications are placing higher demands on edge computing power. Multimodal natural interaction requires the terminal to process data from multiple sensors at once, including voice, vision, and gestures. Complex scene understanding requires AI models to move from single-target recognition to multi-task parallel processing that performs target detection, semantic segmentation, key point detection, and behavior prediction simultaneously. The deployment conditions of edge terminals further require computing platforms to deliver continuous, stable AI computing power within a limited power and heat dissipation budget of 5-8W, balancing high computing power against low power consumption.

As edge AI is deployed, the industry still faces several common challenges. First, 6 TOPS of edge computing power is sufficient for current mainstream edge AI inference workloads, but there is still room for optimization when deploying large models with 10B+ parameters on the edge. Second, the RK3588 platform spans fields such as robotics, industry, healthcare, and smart homes; these fragmented scenarios create customization needs that place very high demands on the completeness of the software toolchain and on low-level adaptation capabilities. Third, coordinating heterogeneous CPU+GPU+NPU computing power requires deep low-level development expertise, which raises the barrier to application development.
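
The point about large models can be made concrete with a rough weight-memory estimate. This is only a sketch under stated assumptions: it reads the large-model figure above as roughly 10 billion parameters, counts model weights only (no activations or runtime buffers), and takes the device memory budget as a hypothetical parameter rather than a specification of any particular core board.

```python
def weight_footprint_gib(num_params: int, bits_per_weight: int) -> float:
    """Approximate memory needed for model weights alone, in GiB."""
    total_bytes = num_params * bits_per_weight / 8
    return total_bytes / 2**30


def fits_in_memory(num_params: int, bits_per_weight: int, budget_gib: float) -> bool:
    """True if the weights alone fit into the given (hypothetical) memory budget."""
    return weight_footprint_gib(num_params, bits_per_weight) <= budget_gib


if __name__ == "__main__":
    params = 10_000_000_000  # assumption: a "10B" parameter model
    for name, bits in (("FP16", 16), ("INT8", 8), ("INT4", 4)):
        size = weight_footprint_gib(params, bits)
        print(f"{name}: {size:.2f} GiB of weights")
    # budget_gib=8.0 is an illustrative value, not a board specification.
    print("INT8 fits in 8 GiB:", fits_in_memory(params, 8, budget_gib=8.0))
```

Even before compute throughput is considered, the weights of a model of this size occupy several gigabytes at INT8, which is why quantization and model compression matter for edge deployment.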

KINGBROTHER Edge AI Localized IPD Solution

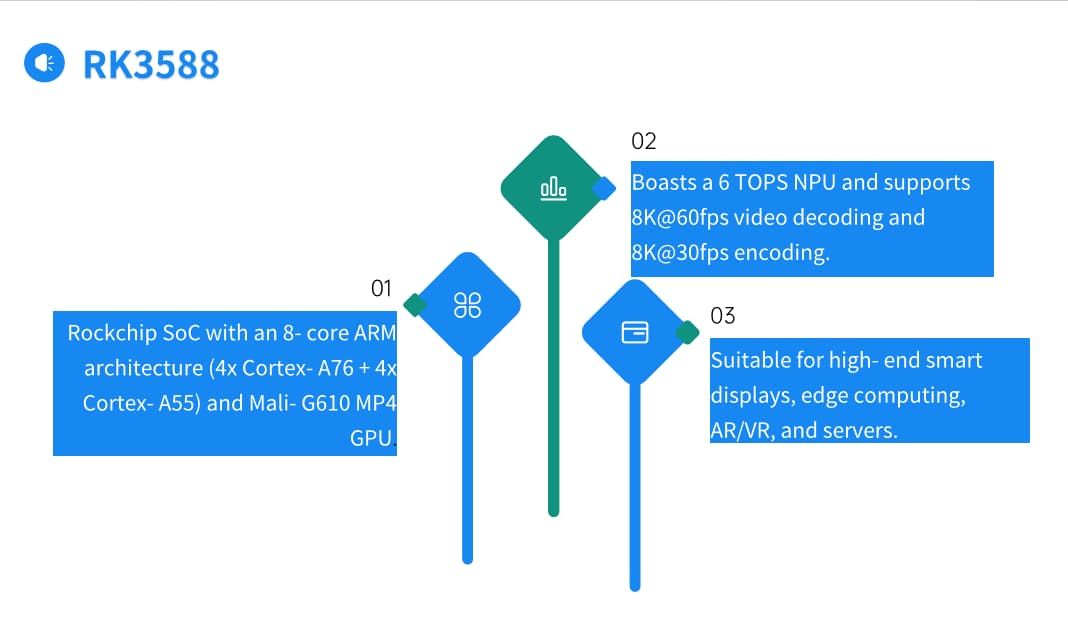

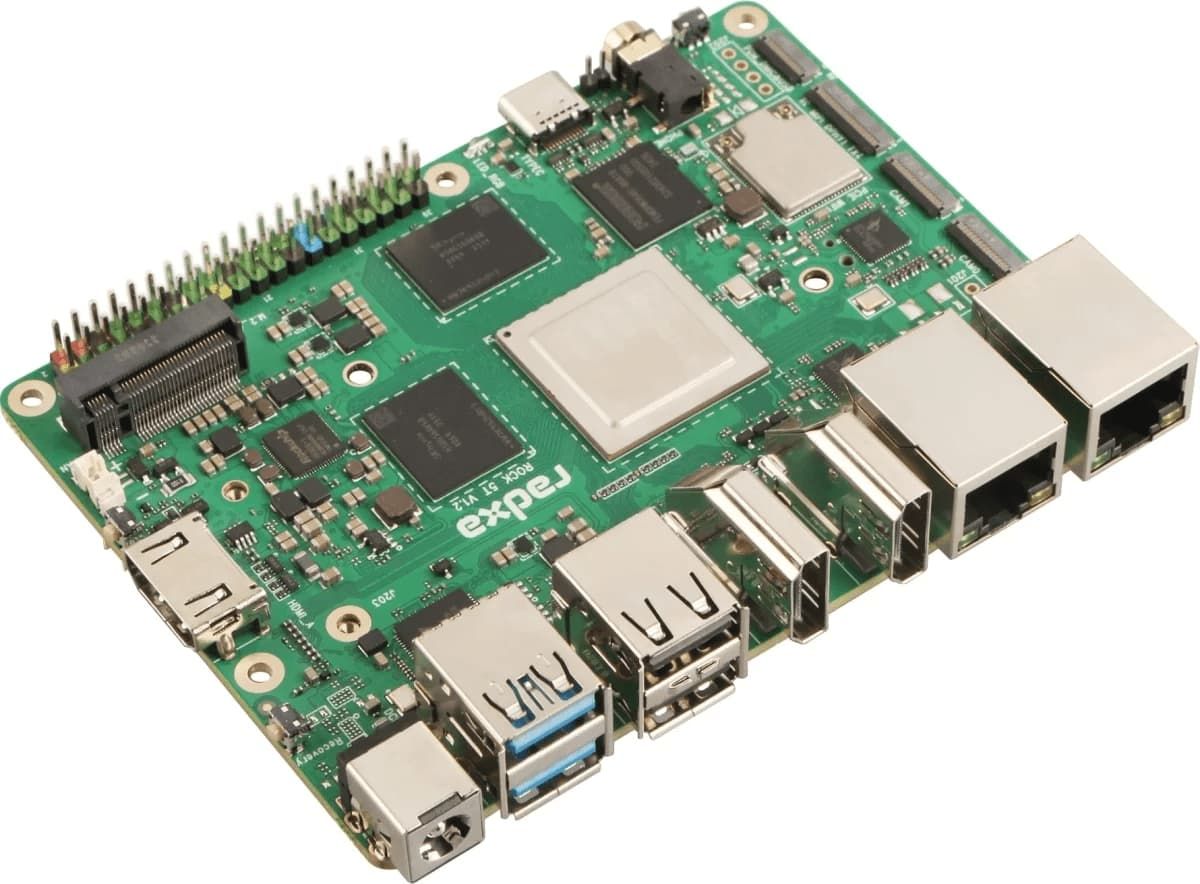

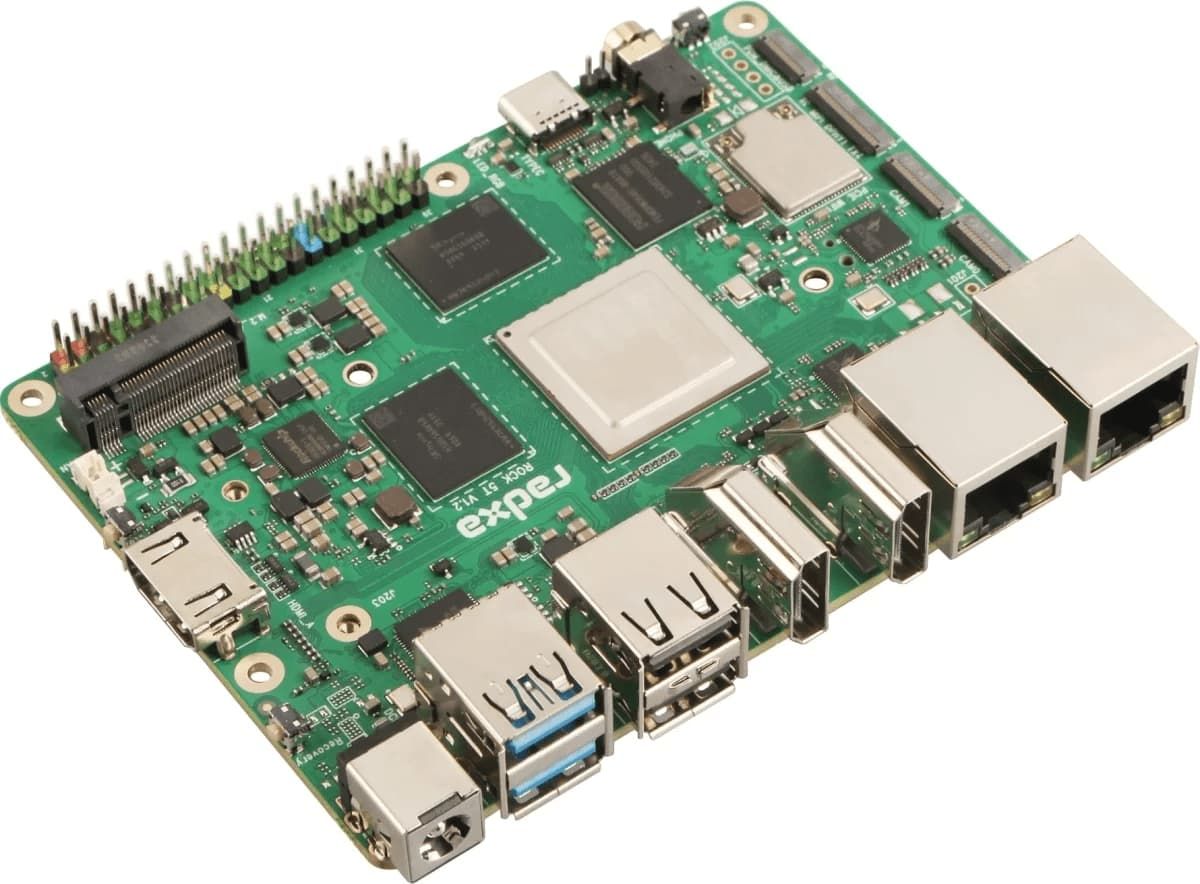

Built on the RK3588, Rockchip's flagship AIoT chip, the embedded core board is deeply optimized for localized AI inference at the edge. Its hardware architecture and capabilities closely match the core needs of edge AI deployment, making it one of the most widely used Chinese-made computing platforms for edge AI scenarios.

In terms of hardware specifications, the RK3588 is built on an advanced 8nm process and features an 8-core 64-bit CPU (4 Cortex-A76 cores @ 2.4GHz + 4 Cortex-A55 cores), an integrated Mali-G610 MP4 high-performance GPU, and a dedicated NPU that provides 6 TOPS of INT8 fixed-point AI computing power. The core board has undergone industrial-grade hardware optimization, achieving 99.8% stability over 720 hours of high-load operation. It also supports wide-temperature operation and resists vibration and electromagnetic interference, making it well suited to long-term continuous operation at industrial sites, in outdoor terminals, and in embedded devices.

In practical localized AI inference deployments, the RK3588 embedded core board addresses industry pain points along four dimensions, providing solid support for edge AI applications.

End-to-End Local Closed-Loop: Strengthening Data Security and Compliance

The RK3588 embedded core board enables closed-loop processing of the entire data acquisition, preprocessing, AI inference, and decision execution process on the edge. All raw data is processed locally, without needing to be uploaded to cloud servers, truly achieving "data never leaves the factory, data never leaves the site." This feature fundamentally avoids the risks of leakage and tampering caused by cross-network data transmission, ensuring the security of core production processes and commercial data for industrial enterprises. It also meets the compliance requirements of the Data Security Law and the Personal Information Protection Law regarding the localization of personal information and important data, eliminating compliance risks for enterprise AI deployment.

Heterogeneous Computing Power Collaboration, Unleashing the Performance of Complex AI Inference on the Edge Side

The RK3588 core board coordinates the CPU, GPU, and NPU as a heterogeneous computing platform, assigning each type of task to the unit best suited to it: the CPU handles task scheduling, sensor data management, and terminal control logic; the GPU handles image preprocessing and parallel computing, working alongside the SoC's dedicated hardware video codec for multi-channel high-definition video stream encoding and decoding; and the NPU focuses on accelerating AI model inference, making full use of the available hardware computing power.
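
The division of labor described above can be sketched as a staged pipeline in which each stage runs in its own worker and hands results to the next through a bounded queue. This is a simplified illustration, not the vendor's scheduling scheme: `preprocess` and `infer` are hypothetical stand-ins written in plain Python for work that would run on the GPU and NPU respectively, and the threshold in `decide` is arbitrary.

```python
import queue
import threading

_STOP = object()  # sentinel that tells each stage to shut down


def stage(fn, inbox, outbox):
    """Apply fn to every item from inbox and forward the result to outbox."""
    while True:
        item = inbox.get()
        if item is _STOP:
            outbox.put(_STOP)
            return
        outbox.put(fn(item))


def preprocess(frame):
    """Stand-in for GPU-side preprocessing: scale pixel values to [0, 1]."""
    return [p / 255.0 for p in frame]


def infer(tensor):
    """Stand-in for NPU inference: return a single score per frame."""
    return sum(tensor) / len(tensor)


def decide(score, threshold=0.5):
    """CPU-side control logic acting on the inference result."""
    return "reject" if score > threshold else "pass"


def run_pipeline(frames):
    """Feed frames through preprocess -> infer -> decide, keeping input order."""
    q_raw, q_pre, q_out = queue.Queue(4), queue.Queue(4), queue.Queue(4)

    def feed():
        for frame in frames:
            q_raw.put(frame)
        q_raw.put(_STOP)

    threads = [
        threading.Thread(target=feed),
        threading.Thread(target=stage, args=(preprocess, q_raw, q_pre)),
        threading.Thread(target=stage, args=(infer, q_pre, q_out)),
    ]
    for t in threads:
        t.start()

    decisions = []
    while True:
        score = q_out.get()
        if score is _STOP:
            break
        decisions.append(decide(score))
    for t in threads:
        t.join()
    return decisions


if __name__ == "__main__":
    print(run_pipeline([[0, 0, 0], [255, 255, 255]]))
```

The bounded queues apply back-pressure, so a slow inference stage throttles the stages feeding it instead of letting frames pile up in memory.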

This heterogeneous architecture efficiently supports multimodal data fusion and the parallel operation of multi-task AI models. It can process multiple types of data such as vision, speech, and sensor input at the same time, and can run complex inference tasks such as object detection, semantic segmentation, and key point detection concurrently. This moves AI applications from "identifying what something is" to "understanding what it is doing," meeting the need of smart industry, intelligent terminals, and similar scenarios to understand complex scenes.

High Energy Efficiency Design, Adaptable to Deployment Across Edge Scenarios

Edge terminal deployment environments often lack data center-level power supply and heat dissipation conditions, placing stringent requirements on the power consumption control of computing platforms. The RK3588 utilizes an advanced 8nm process, providing a stable 6 TOPS of AI computing power while achieving excellent energy efficiency. It can run mainstream AI models for inference with a typical power consumption of 5-8W, eliminating the need for complex heat dissipation designs and adapting to various deployment forms such as embedded devices and battery-powered terminals.
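
As a back-of-the-envelope check on these figures, the following sketch computes an indicative efficiency range and energy use from the 6 TOPS and 5-8W values quoted above. It is only a rough estimate: 6 TOPS is a peak NPU figure while 5-8W is a typical power draw, so the ratio is indicative rather than a measured efficiency, and the 50 Wh battery capacity is a hypothetical value chosen for illustration.

```python
def tops_per_watt(tops: float, watts: float) -> float:
    """Indicative compute efficiency: peak TOPS divided by typical power."""
    return tops / watts


def daily_energy_kwh(watts: float) -> float:
    """Energy used over 24 hours of continuous operation, in kWh."""
    return watts * 24 / 1000


def battery_runtime_hours(battery_wh: float, watts: float) -> float:
    """Hours of operation from a battery of the given capacity (ideal case)."""
    return battery_wh / watts


if __name__ == "__main__":
    for watts in (5.0, 8.0):
        print(
            f"{watts:.0f} W: "
            f"{tops_per_watt(6.0, watts):.2f} TOPS/W, "
            f"{daily_energy_kwh(watts):.3f} kWh/day, "
            # Assumption: a 50 Wh battery pack, ignoring conversion losses.
            f"{battery_runtime_hours(50.0, watts):.1f} h on a 50 Wh pack"
        )
```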

Furthermore, the core board has undergone industrial-grade reliability optimization, and its core components are industrial-grade parts suitable for wide-temperature operating environments. It resists vibration and electromagnetic interference, enabling long-term stable operation in demanding environments such as industrial workshops, outdoor campuses, and in-vehicle equipment, and ensuring the continuity of AI inference services.

Comprehensive Software Ecosystem, Lowering the Barrier to Local AI Development

Addressing the development challenges brought about by fragmented scenarios, the RK3588 platform is equipped with a comprehensive software development toolchain and model adaptation library. It supports model conversion and deployment for mainstream AI frameworks such as TensorFlow, PyTorch, and Caffe, providing rich operator support and optimization tools to help developers quickly complete edge porting and performance optimization of AI models. The platform supports multiple mainstream operating systems, including Linux and Android, adapting to the application development needs of various industries and significantly lowering the development threshold for localized AI applications.
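
A model conversion flow of this kind might look like the sketch below. It assumes Rockchip's RKNN-Toolkit2 Python package as the conversion tool, which the article does not name, and an ONNX export of the model; the call names follow Rockchip's published examples as best understood here and should be checked against the installed toolkit version. The file paths, normalization values, and the helper `write_calibration_list` are hypothetical.

```python
from pathlib import Path


def write_calibration_list(image_paths, out_file):
    """Write one image path per line, the plain-text list used for quantization calibration."""
    out = Path(out_file)
    out.write_text("\n".join(str(p) for p in image_paths) + "\n")
    return out


def convert_onnx_to_rknn(onnx_path, dataset_txt, rknn_path):
    """Convert an ONNX model to an INT8 RKNN model targeting the RK3588 NPU."""
    # Assumption: rknn-toolkit2 is installed on the development host.
    # Imported lazily so the rest of this file works without it.
    from rknn.api import RKNN

    rknn = RKNN()
    try:
        # Assumption: inputs are 8-bit RGB scaled to [0, 1]; adjust per model.
        rknn.config(
            mean_values=[[0, 0, 0]],
            std_values=[[255, 255, 255]],
            target_platform="rk3588",
        )
        if rknn.load_onnx(model=str(onnx_path)) != 0:
            raise RuntimeError("failed to load ONNX model")
        # INT8 quantization uses the calibration images listed in dataset_txt.
        if rknn.build(do_quantization=True, dataset=str(dataset_txt)) != 0:
            raise RuntimeError("failed to build RKNN model")
        if rknn.export_rknn(str(rknn_path)) != 0:
            raise RuntimeError("failed to export RKNN model")
    finally:
        rknn.release()


if __name__ == "__main__":
    # Hypothetical file names for illustration only.
    dataset = write_calibration_list(["calib/img_000.jpg", "calib/img_001.jpg"], "dataset.txt")
    print("calibration list written to", dataset)
    # convert_onnx_to_rknn("model.onnx", dataset, "model.rknn")
```

Quantizing to INT8 at conversion time is what lets the model use the NPU's fixed-point computing power, so the calibration images should be representative of the data seen in the field.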

Full-Chain Ecosystem and IDH Services: Solving the Challenges of Fragmented Scenario Deployment

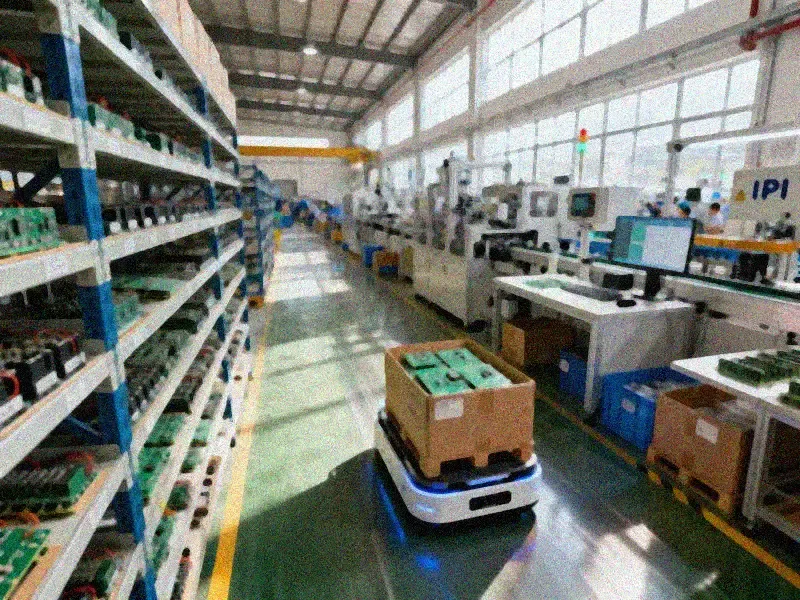

The widespread application of the RK3588 platform has brought about rich scenario adaptation needs, and also placed higher demands on customized service capabilities. We have built a complete technology ecosystem and a full-process IDH service system, providing customers with an integrated solution of "computing power platform + hardware manufacturing + technical support," comprehensively addressing industry deployment pain points.

At the technology platform level, we maintain close technical cooperation with Rockchip, integrating the chip vendor's development resources and toolchain, and have completed low-level BSP optimization and model adaptation for localized AI inference scenarios in different industries. Our technology platform also covers multiple computing platforms such as ARM, FPGA, and GPU, so we can provide customized core board hardware and computing power configurations based on each customer's scenario, computing power targets, and deployment form, covering development needs from consumer-grade smart terminals to industrial-grade edge devices.

At the IDH service level, we offer highly customized hardware design, heterogeneous integration capabilities, and full-process technical support. To meet the diverse needs of different clients, we provide a complete technical service chain that runs from core board schematic design, PCB layout, hardware adaptation, and low-level driver development through to lightweight AI model porting, inference performance optimization, and multi-scenario application adaptation. To address the high barrier to heterogeneous computing development, we provide mature reference solutions and development support for heterogeneous computing power scheduling, helping clients make full use of the combined CPU+GPU+NPU computing power without investing significant low-level development resources, and enabling rapid product development and deployment.

Full-Scenario Coverage: Practical Implementation of Localized AI Inference

The localized AI inference solution based on the RK3588 NPU embedded core board has been deployed on a large scale in multiple fields, including industrial manufacturing, smart parks, smart homes, healthcare, and intelligent inspection, covering the mainstream application scenarios of current edge AI inference.

In the industrial manufacturing field, the core board is widely used in production line visual quality inspection, predictive maintenance of equipment, and intelligent monitoring of the production process. Product images captured by industrial cameras on the production line are directly used for defect detection and classification locally. Core production data does not leave the factory area, ensuring the security of process data and achieving millisecond-level defect identification and downtime response, while significantly reducing the bandwidth costs caused by uploading massive amounts of video data.

In smart parks and government/enterprise scenarios, the core board supports the localized deployment of applications such as access control facial recognition, park behavior analysis, and emergency event monitoring. The entire process of facial feature extraction, identity verification, and violation detection is completed locally. Personnel information and monitoring footage do not need to be uploaded to the cloud, ensuring personal information security, mitigating the risk of system failure due to network interruptions, and meeting the compliance requirements of government and enterprise units.

In the smart home and consumer terminal field, the core board provides local AI computing power for smart appliances, home robots, and other devices. Functions such as facial recognition unlocking, voiceprint command recognition, and intelligent sensing of the home environment are all processed locally. Images and voice data from users' homes do not need to be uploaded to the brand's cloud, which addresses the privacy leakage concerns associated with smart homes while delivering lower-latency human-computer interaction.

In healthcare and intelligent inspection, the core board supports local image analysis on portable medical devices and real-time environmental perception for outdoor inspection robots. Medical image data and inspection data are analyzed and screened locally on the device first, which keeps sensitive data secure and allows stable operation offline, suiting deployments without a stable public network, such as outdoor and remote areas.

Localized AI Inference Deployment: Creating Comprehensive Core Value for Customers

The localized AI inference solution based on the RK3588 embedded core board delivers quantifiable, comprehensive value to customers across various industries, driving the secure, efficient, and low-cost large-scale deployment of AI applications.

Regarding security and compliance, end-to-end localized processing achieves a closed-loop data flow, fundamentally mitigating the risk of data breaches. This helps customers easily meet legal and regulatory requirements related to data security and personal information protection, eliminating compliance risks in AI deployment while protecting core production data and trade secrets.

Regarding cost optimization, edge-side local inference significantly reduces the cloud transmission of raw data, substantially lowering bandwidth costs, cloud computing power leasing costs, and storage costs. Simultaneously, the high-performance hardware of the RK3588 platform significantly reduces customers' initial hardware investment, achieving cost optimization throughout the entire lifecycle.

In terms of performance, local inference achieves millisecond-level end-to-end response, completely resolving latency issues caused by cloud reliance. This ensures real-time requirements in scenarios such as industrial control and emergency response. Simultaneously, the multi-tasking capabilities enabled by heterogeneous computing power collaboration support more complex AI application scenarios, enhancing the product's core competitiveness.

Regarding development efficiency, a comprehensive software ecosystem and full-process IDH services significantly lower the underlying development threshold for customers. This allows them to focus on upper-layer application functions and product differentiation design without investing heavily in hardware adaptation and model optimization. Product development cycles are significantly shortened, enabling rapid time-to-market and large-scale deployment.

End-to-End Service Guarantee, Facilitating Product Deployment from Design to Mass Production

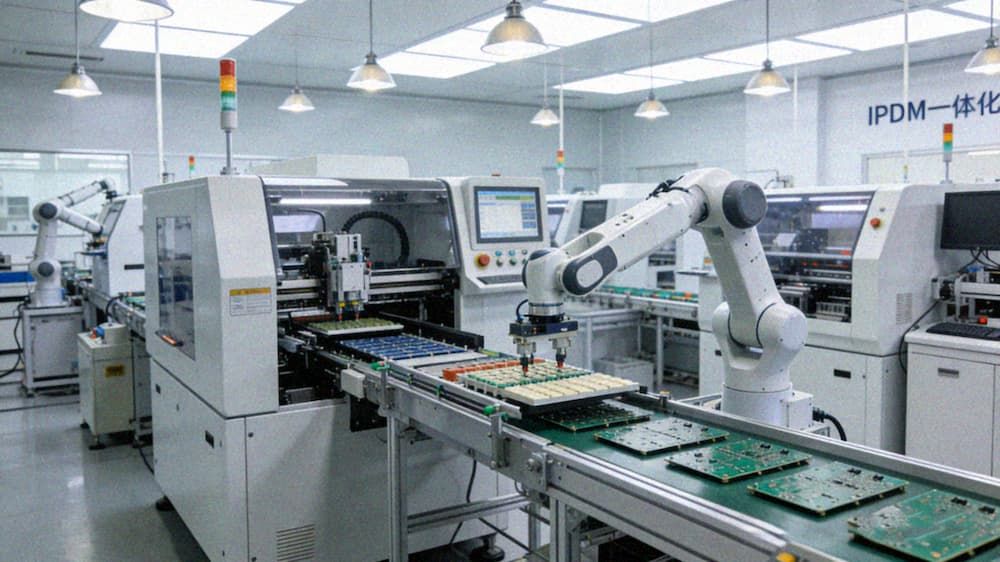

We provide customers with end-to-end hardware solutions from product design to mass production delivery, comprehensively ensuring the deployment and long-term stable operation of localized AI inference solutions.

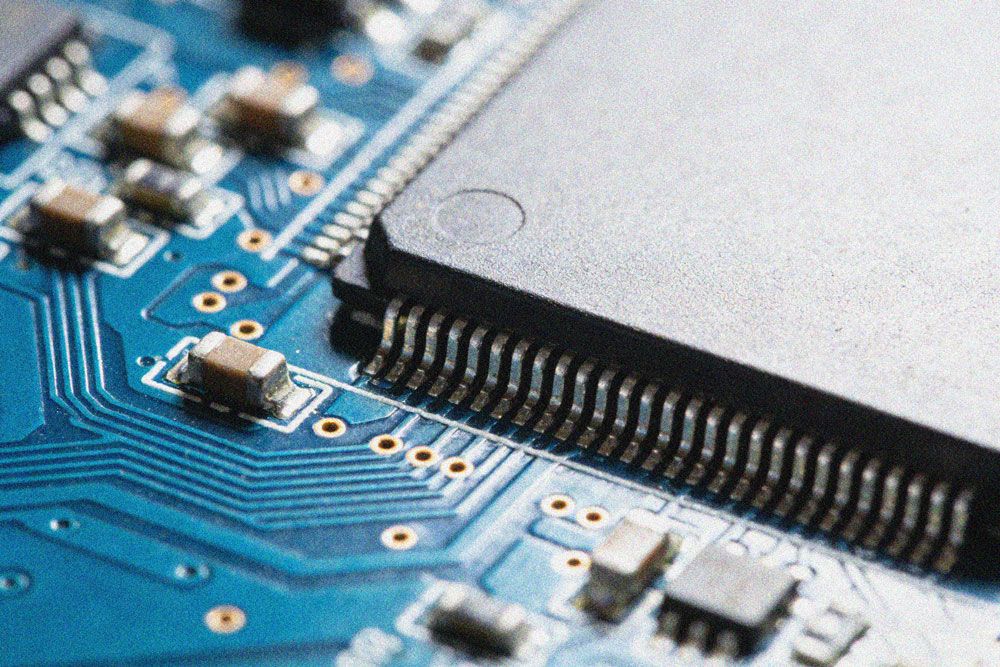

In system-level hardware design, we provide end-to-end technical support, including schematic design of core boards and baseboards, PCB layout optimization, high-speed signal integrity design, power architecture design, and thermal and structural co-design. We can deliver targeted custom designs based on customers' deployment scenarios, hardware form factors, and functional requirements, ensuring the electrical performance and environmental adaptability of their products.

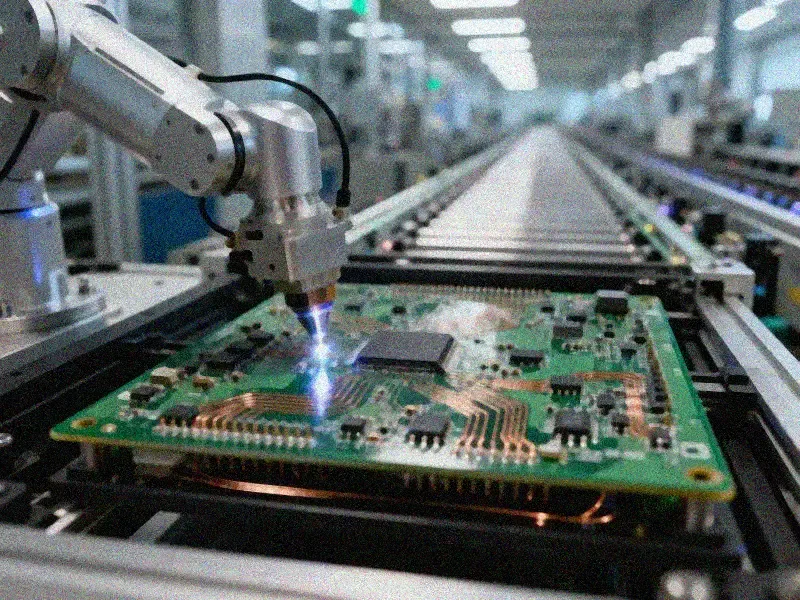

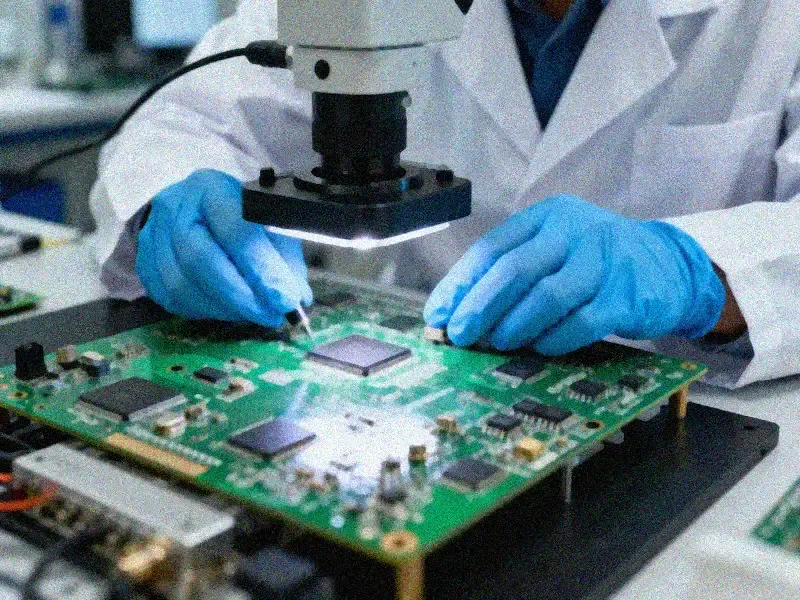

In high-end PCB manufacturing and system integration, we have mature manufacturing capabilities, including processing of multi-layer HDI boards and high-speed, low-loss materials, together with core processes such as high-reliability immersion gold plating and precise impedance control. We also provide end-to-end EMS manufacturing services, including supply chain assurance for the full BOM, PCBA assembly, module assembly, system integration, aging testing, functional verification, and batch delivery. This gives us control over the whole path from design to mass production and ensures consistent delivery cycles and product quality.

As the integration of AI technology and the real economy continues to deepen, localized AI inference has become the core development paradigm of the edge intelligence era. With its mature edge AI computing power, comprehensive development ecosystem, and industrial-grade stability, the RK3588 NPU embedded core board is becoming a core computing platform for localized AI inference deployments across industries. Going forward, we will continue to optimize our core board solutions and provide end-to-end customized services, working with industry partners to promote the large-scale deployment of edge AI technology and to provide secure, efficient, and reliable computing power for the intelligent upgrade of a wide range of industries.