This article examines the most consequential industry change announced at NVIDIA's GTC 2026 developer conference: NVIDIA's restructuring of the standards for the entire AI server hardware manufacturing chain. In plain technical terms, it explains how KINGBROTHER's IPDM one-stop integrated design and manufacturing solution fits into that chain, from top-level chip architecture design to large-scale hardware mass production. The goal is to help general readers follow the full path by which computing power moves from theoretical specifications to broad industrial benefit in the current AI boom.

NVIDIA's GTC 2026 developer conference, held in March, was once again one of the biggest events in the global tech world. Jensen Huang, in his signature leather jacket, unveiled the next-generation Rubin architecture and upgraded large-model capabilities, and the announcements dominated social media. Yet many people overlooked the most disruptive move of the conference: not the leap in single-GPU performance, but NVIDIA's restructuring of the entire AI server hardware manufacturing chain, which will shape the computing infrastructure behind the AI services we use in the coming years. As NVIDIA redefines the top-level technical standards for AI servers, Chinese electronics manufacturers, represented by KINGBROTHER, have become a core bridge between chip architecture innovation and large-scale mass production through their one-stop IPDM (Integrated Product Design and Manufacturing) solutions.

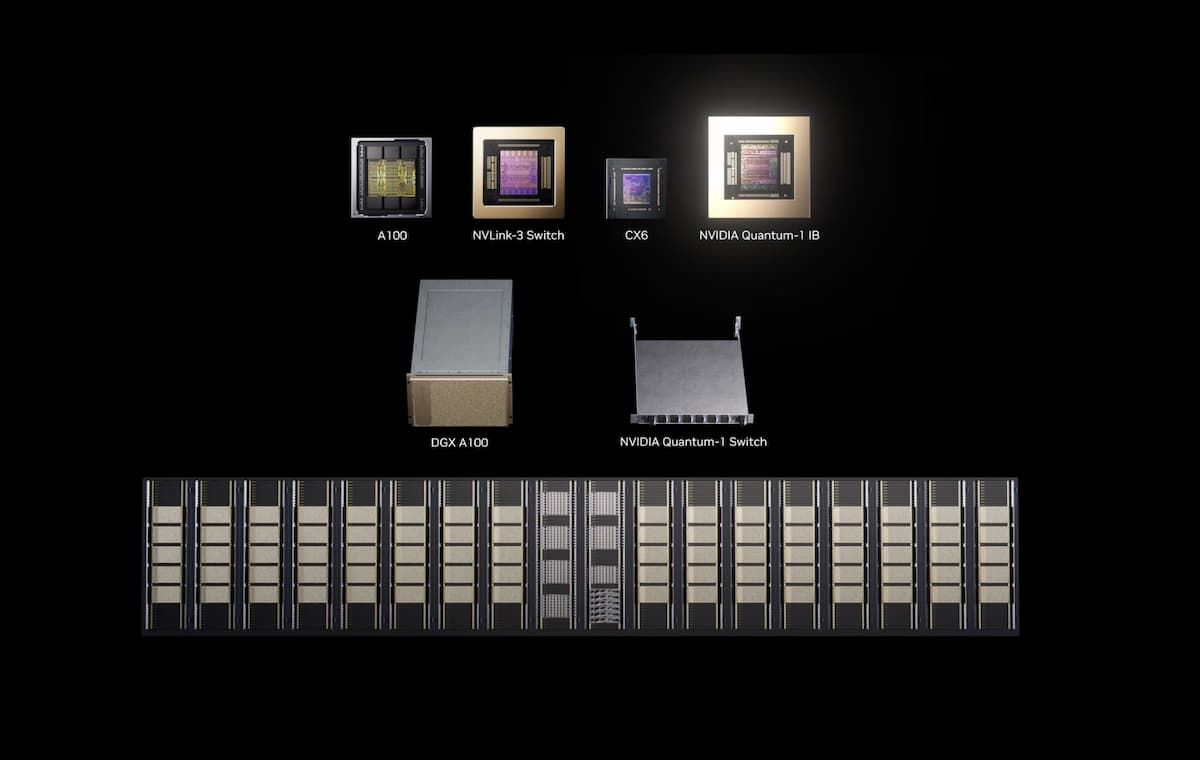

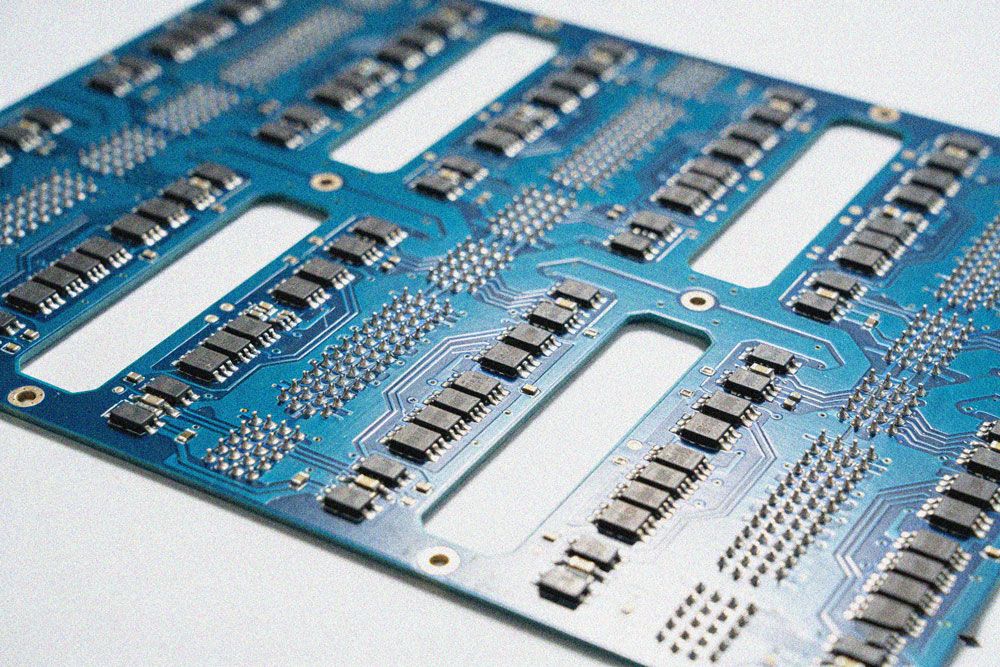

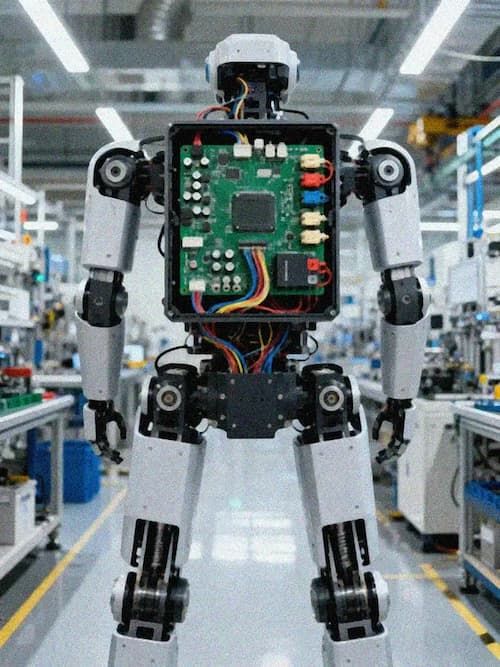

Many people's understanding of AI is limited to applications like ChatGPT and generative video, but every AI capability ultimately rests on computing power, and the core carrier of large-scale AI computing power is the AI server. A home computer typically has a single CPU and graphics card for everyday office work and entertainment. A standard AI server, by contrast, is a "miniature computing power factory": it usually carries 8-32 top-tier AI GPUs, along with large amounts of high-speed memory, cross-chip interconnect systems, and sophisticated cooling and power supply modules. The total computing power of a single AI server can equal that of thousands of home computers. Whether this "computing power factory" runs stably, and whether the GPUs reach their theoretical performance, depends crucially on the hardware board system built around high-speed PCBs. From the accelerator board and interconnect board to the power management board, the design and manufacturing precision of each board directly affects the final computing performance.

For the past decade or so, AI server manufacturing has followed a "piecemeal" model: NVIDIA sells GPU chips, motherboard manufacturers design compatible circuit boards, server manufacturers assemble the complete system, and cloud providers and large internet companies then customize and modify it to their specific needs. The resulting supply chain has many links, high technical barriers, and long delivery cycles, and it suffers from three long-standing problems. First, design and manufacturing are disconnected: insufficient attention to DFM (design for manufacturability) leads to low mass production yields. Second, system integration is poor: hardware from different manufacturers is difficult to adapt, often resulting in situations where "GPUs have strong theoretical performance, but the overall computing power of the machine is not fully utilized." Third, reliability is hard to guarantee: the high power consumption and sustained high load of AI servers place extreme demands on the environmental adaptability and long-term stability of the hardware, and end-to-end quality control is difficult in a decentralized manufacturing model. These pain points are precisely the core challenges that AI products face in manufacturing. At GTC 2026, NVIDIA completely rewrote this long-standing manufacturing logic.

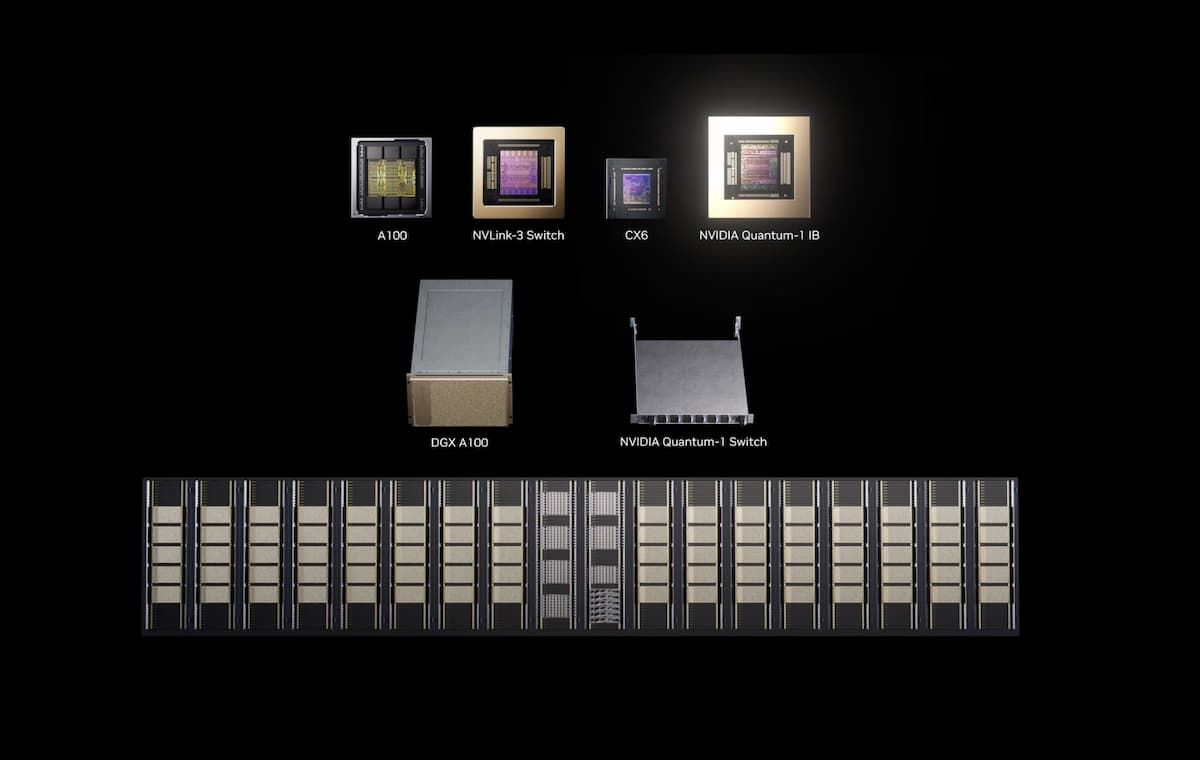

The first core change is the shift from "selling chips" to "setting manufacturing standards," making GPUs the "core pre-fabricated modules" of servers. The biggest upgrade of the new-generation Rubin architecture GPU released at this conference is not only the doubling of single-chip computing power, but also the adoption of a fully modular chiplet manufacturing solution. In layman's terms, previous top-tier AI GPUs were massive, monolithic chips, like a 10-inch chiffon cake: even a small air bubble during baking could ruin the whole cake, so a single defect could render the entire chip unusable and push manufacturing costs prohibitively high. The new chiplet solution breaks the large chip into multiple standardized smaller dies, like baking four small cakes instead of one large one, so a flaw spoils only one of them. This significantly improves production yield: if one die is defective, only that die needs to be replaced, and dies can be flexibly combined according to computing power requirements. More importantly, NVIDIA has packaged the GPU, cache, and interconnect modules into a complete "computing module" with fixed dimensions, interfaces, and power supply standards for server manufacturers. Manufacturers no longer need to invest heavily in designing GPU adaptation solutions; they can simply plug the module into a standard motherboard. This drastically lowers the technological barrier to complete system manufacturing.
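The yield argument behind the cake analogy can be made concrete with a small calculation. The sketch below uses the textbook Poisson yield model, in which the probability that a die has no killer defect is exp(-area × defect density). The die area, the defect density, and the split into four equal chiplets are illustrative assumptions, not figures from NVIDIA or from the conference, and the model ignores packaging cost and the extra area that die-to-die interfaces require.

```python
import math


def poisson_yield(area_cm2: float, defects_per_cm2: float) -> float:
    """Fraction of dies with zero killer defects under a Poisson defect model."""
    return math.exp(-area_cm2 * defects_per_cm2)


def silicon_per_good_unit(total_area_cm2: float, n_dies: int,
                          defects_per_cm2: float) -> float:
    """Average silicon area fabricated per good product.

    Assumes the product is split into n_dies equal dies and that each die is
    tested before assembly, so only defective dies are discarded.
    """
    die_area = total_area_cm2 / n_dies
    return total_area_cm2 / poisson_yield(die_area, defects_per_cm2)


# Hypothetical numbers chosen only for illustration.
TOTAL_AREA_CM2 = 8.0
DEFECTS_PER_CM2 = 0.1

monolithic = silicon_per_good_unit(TOTAL_AREA_CM2, 1, DEFECTS_PER_CM2)
chiplets = silicon_per_good_unit(TOTAL_AREA_CM2, 4, DEFECTS_PER_CM2)
print(f"monolithic: {monolithic:.2f} cm^2 of silicon per good unit")
print(f"4 chiplets: {chiplets:.2f} cm^2 of silicon per good unit")
```

Under these assumptions the monolithic design consumes roughly 1.8 times as much silicon per good unit as the four-chiplet design, which is the effect the analogy describes. Real cost comparisons would also have to account for packaging and assembly yield.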

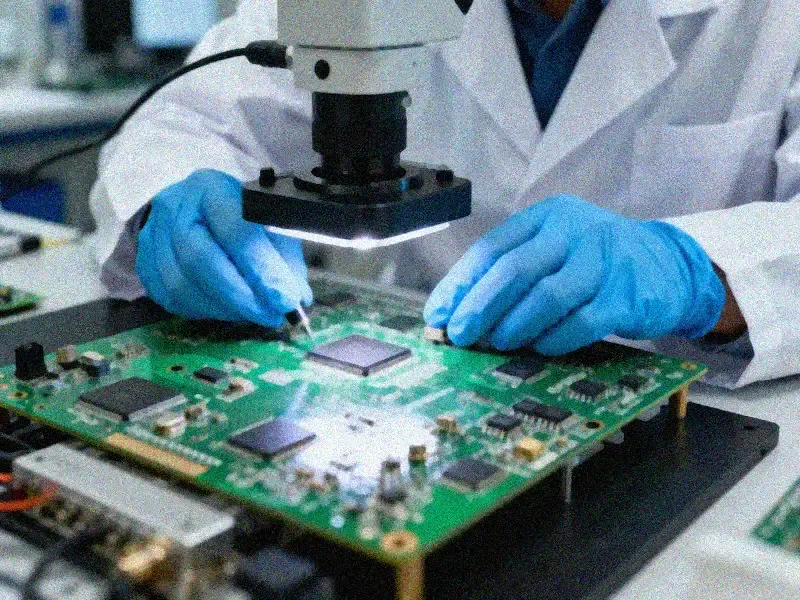

However, the successful mass production and deployment of this standardized computing module relies heavily on front-end integrated design capabilities. The IPD (Integrated Product Design) platform within KINGBROTHER's IPDM system is the core tool bridging NVIDIA's standards and hardware implementation. Built around ARM, FPGA, and GPU chip platforms, it provides a full-process service covering schematic design, SI/PI (signal integrity and power integrity) simulation, high-speed PCB design, and EMC optimization. It supports 56-layer, 112Gbps high-speed PCB design, which matches the hardware design requirements of the new generation of Rubin architecture GPUs. In addition, drawing on a database of over 3.27 million certified materials and the KBOM technology platform, KINGBROTHER can provide intelligent matching of multi-source alternative materials, full-lifecycle BOM risk warning, and domestic alternative solutions. This addresses the supply chain stability problem of standardized computing modules and keeps overall machine costs under control through material optimization, turning NVIDIA's modular standards from paper specifications into rapidly deployable hardware products.

The second core transformation is the establishment of a "high-speed computing network" within the server, solving the industry bottleneck of data transmission. Many people don't know that the biggest performance bottleneck of AI servers has never been the computing power of the GPU itself, but rather the data transmission speed between multiple GPUs and between GPUs and memory. This is like having 10 top-notch workers in a factory, but raw materials can only be transported via narrow, winding paths; no matter how efficient the workers are, they're essentially idle. At this year's GTC, NVIDIA directly incorporated its next-generation NVLink 8.0 interconnect technology into the mandatory manufacturing standard for AI server motherboards. Single-link bandwidth is doubled compared to the previous generation, while simultaneously unifying the interconnect protocols between GPUs, CPUs, and memory. Previously, the transmission efficiency between GPUs in servers from different manufacturers varied drastically. Now, manufactured according to NVIDIA's standards, all systems can fully utilize the GPU's interconnect bandwidth, completely resolving the pain point of "wasted computing power."
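The factory analogy above can be turned into a rough model of how interconnect bandwidth limits GPU utilization. The sketch below treats each training step as a compute phase plus a data exchange phase and reports the fraction of time the GPU spends computing. All numbers (compute time, bytes exchanged, link bandwidth) are hypothetical and are not NVLink specifications; the `overlap` parameter is an assumed fraction of communication hidden behind computation.

```python
def step_time_s(compute_s: float, bytes_exchanged: float,
                link_bytes_per_s: float, overlap: float = 0.0) -> float:
    """Time for one step: compute plus the communication that is not hidden."""
    comm_s = bytes_exchanged / link_bytes_per_s
    exposed_comm_s = comm_s * (1.0 - overlap)
    return compute_s + exposed_comm_s


def gpu_utilization(compute_s: float, bytes_exchanged: float,
                    link_bytes_per_s: float, overlap: float = 0.0) -> float:
    """Fraction of each step the GPU spends on useful computation."""
    return compute_s / step_time_s(compute_s, bytes_exchanged,
                                   link_bytes_per_s, overlap)


# Hypothetical workload: 10 ms of compute and 2 GB exchanged per step.
COMPUTE_S = 0.010
BYTES_PER_STEP = 2e9

for bandwidth in (200e9, 400e9):  # bytes per second, illustrative values
    util = gpu_utilization(COMPUTE_S, BYTES_PER_STEP, bandwidth)
    print(f"{bandwidth / 1e9:.0f} GB/s link -> {util:.0%} GPU utilization")
```

In this toy model, doubling the link bandwidth raises utilization from one half to two thirds without touching the GPU itself, which illustrates why interconnect standards matter as much as raw chip performance.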

The efficiency of this "computing power highway" ultimately depends on its foundation: the manufacturing quality of the high-speed PCBs and interconnect cards. The doubled bandwidth of NVLink 8.0 places extreme demands on the PCB's signal integrity, resistance to electromagnetic interference, and transmission latency. Traditional manufacturing processes easily introduce signal attenuation, impedance mismatch, and excessive latency, which would render NVIDIA's high-bandwidth standards meaningless. With over two decades of accumulated PCB technology, KINGBROTHER has developed full-category manufacturing capability covering high-speed boards, multilayer boards, HDI boards, and rigid-flex boards. Through the selection of low-loss, high-frequency substrates, precise differential pair routing and impedance matching, and strict signal integrity control, it meets the demands of 112Gbps ultra-high-speed signal transmission, allowing the bandwidth potential of NVLink 8.0 to be used in full. The interconnect communication board solution within its IPDM system also provides ultra-low-latency communication, multi-protocol conversion, and optical-electrical co-design, opening a high-speed data path across the entire link between the GPU and the CPU, memory, and storage. From a hardware manufacturing perspective, this is what puts NVIDIA's interconnect standards into practice.
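To see why impedance matching matters, it helps to look at how much signal a mismatch reflects. The sketch below applies the standard transmission-line formula for the reflection coefficient, Γ = (Z_load − Z0) / (Z_load + Z0), and converts it to return loss in decibels. The 100-ohm differential target and the deviation values are assumptions chosen for illustration; they are not taken from an NVLink or KINGBROTHER specification.

```python
import math


def reflection_coefficient(z_load_ohm: float, z0_ohm: float) -> float:
    """Voltage reflection coefficient at a boundary between two impedances."""
    return (z_load_ohm - z0_ohm) / (z_load_ohm + z0_ohm)


def return_loss_db(gamma: float) -> float:
    """Return loss in dB; larger values mean less reflected energy."""
    if gamma == 0:
        return math.inf
    return -20.0 * math.log10(abs(gamma))


Z0_OHM = 100.0  # assumed differential impedance target

for deviation in (0.05, 0.10, 0.20):  # hypothetical manufacturing deviations
    z_actual = Z0_OHM * (1.0 + deviation)
    gamma = reflection_coefficient(z_actual, Z0_OHM)
    print(f"{deviation:.0%} high: gamma={gamma:.4f}, "
          f"reflected power={gamma ** 2:.2%}, "
          f"return loss={return_loss_db(gamma):.1f} dB")
```

Each discontinuity along a trace, via, or connector contributes its own reflection, so tight impedance tolerance across the whole channel becomes more important as data rates rise.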

The third core transformation is the standardization of manufacturing solutions for long-standing challenges such as heat dissipation and power supply. A fully configured top-tier AI server draws peak power comparable to a dozen household air conditioners, and heat dissipation has always been a core constraint on usable computing power. Previously, manufacturers explored heat dissipation solutions independently as they moved from air cooling to liquid cooling, which often resulted in leakage, uneven heat dissipation, and high overall failure rates. At this conference, NVIDIA launched an end-to-end liquid cooling manufacturing standard that integrates the liquid cooling module and GPU computing module into a single design. Modules built to this standard undergo full-scenario stress testing before leaving the factory, so server manufacturers only need to connect standard coolant piping before use. This not only improves heat dissipation efficiency by 40% but also reduces the overall system's heat-related failure rate by over 80%.
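The scale of the cooling problem can be estimated with the basic heat balance P = ṁ · c_p · ΔT, which relates heat load to coolant mass flow and temperature rise. The sketch below solves it for the required flow rate. The 12 kW heat load is an assumption loosely based on the "dozen air conditioners" comparison (about 1 kW each), and the coolant is treated as plain water; real systems often use water-glycol mixtures with different properties.

```python
WATER_SPECIFIC_HEAT_J_PER_KG_K = 4186.0  # approximate value for liquid water
WATER_DENSITY_KG_PER_L = 1.0             # approximate value for liquid water


def coolant_flow_l_per_min(heat_load_w: float, delta_t_k: float) -> float:
    """Coolant flow needed to carry away heat_load_w with a delta_t_k rise."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    mass_flow_kg_per_s = heat_load_w / (WATER_SPECIFIC_HEAT_J_PER_KG_K * delta_t_k)
    return mass_flow_kg_per_s / WATER_DENSITY_KG_PER_L * 60.0


# Hypothetical server: 12 kW of heat, coolant allowed to warm by 10 K.
HEAT_LOAD_W = 12_000.0
DELTA_T_K = 10.0

flow = coolant_flow_l_per_min(HEAT_LOAD_W, DELTA_T_K)
print(f"required flow: {flow:.1f} L/min")
```

Under these assumptions a single server needs on the order of 17 litres of coolant per minute, which gives a sense of why leak-free, standardized piping and pre-tested cooling modules matter at data center scale.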

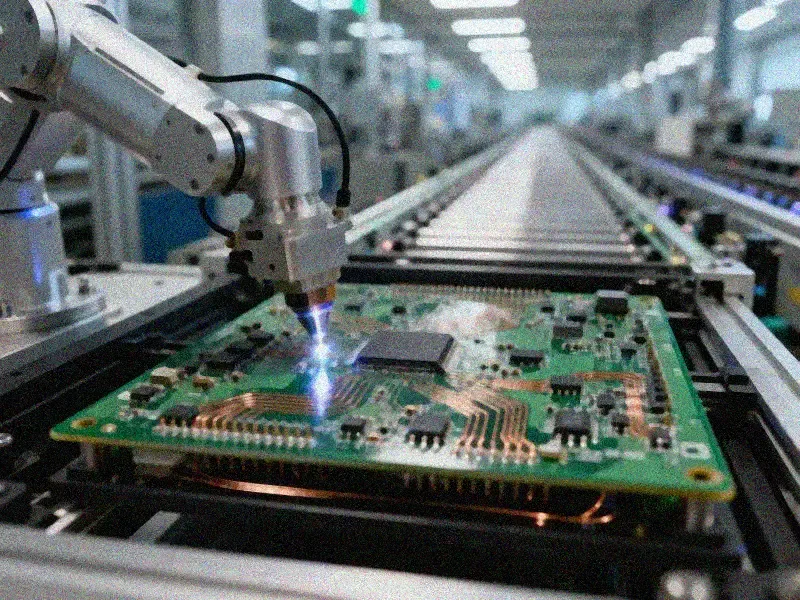

The implementation of this standardized liquid cooling and power supply solution also requires collaborative technology across the entire manufacturing process. KINGBROTHER's high-heat-dissipation printed circuit board solution, through core processes such as localized embedded ceramics, thick aluminum-based copper, and embedded copper blocks, combined with optimized heat dissipation holes and precise placement of heat-generating components, significantly improves the board's thermal conductivity, perfectly complementing NVIDIA's integrated liquid cooling solution. Simultaneously, its power management board solution, through dynamic power consumption optimization, multi-phase power supply stabilization, and high-voltage isolation protection technology, solves the power supply stability challenge under peak power consumption in top-tier AI servers. In the IPM (Integrated Product Manufacturing) process, KINGBROTHER utilizes high-density SMT (Surface Mount Technology), conformal coating, and comprehensive reliability testing to ensure the long-term stable operation of AI server hardware under high temperature, high humidity, and high load environments. Combined with the full-dimensional testing capabilities of its CNAS/CMA-certified laboratory, KINGBROTHER systematically improves product reliability across four dimensions: design, materials, processes, and usage, truly translating NVIDIA's thermal and power supply standards into the long-term stable operation of the entire system.

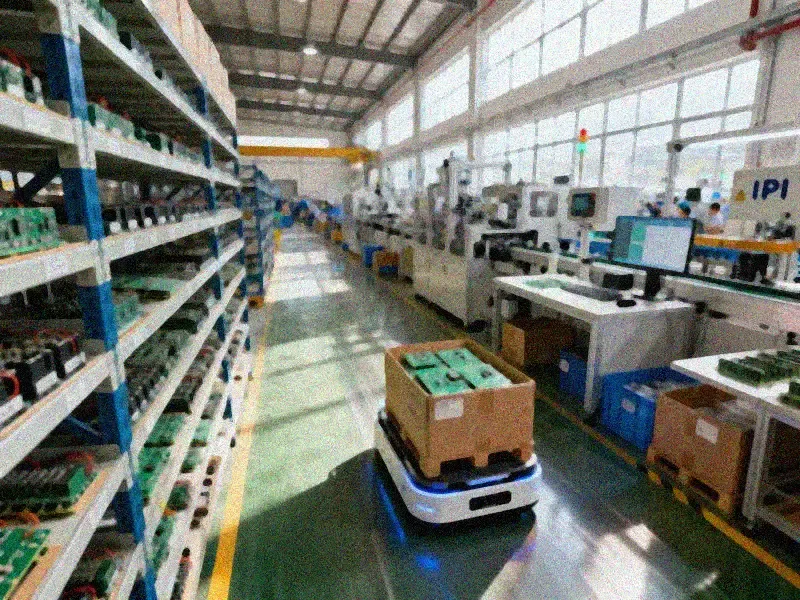

For the entire industry, this hardware manufacturing revolution at GTC 2026 essentially represents NVIDIA transforming AI servers from "customized luxury goods" into "standardized industrial products." KINGBROTHER's one-stop IPDM solution is a core lever for rapid, low-cost, and highly reliable mass production of this standardized product. The system covers integrated product design, PCB manufacturing, PCBA integration and assembly, and reliability verification. It can shorten the AI hardware R&D cycle by 20%-30% and compress the mass production cycle to 60% of the industry average, helping customers double their product launch speed. Through DFM (design for manufacturability) optimization and domestic substitution solutions, it also significantly reduces manufacturing costs. This means that top-tier AI computing power, previously affordable only to leading cloud vendors and internet giants, can now be accessed by small and medium-sized manufacturers and local intelligent computing centers. Through IPDM solutions, they can quickly and cost-effectively manufacture AI servers compliant with NVIDIA's next-generation standards and obtain top-tier computing power.

From a deeper industry perspective, NVIDIA's announcements at GTC 2026 rewrote the top-level rules for AI computing hardware. The one-stop design and manufacturing system represented by KINGBROTHER IPDM is key to putting those rules into practice across the industry so that their benefits reach everyone. KINGBROTHER has so far served dozens of leading AI companies with this system, including Cloudwalk Technology and LeiShen Intelligent. It has accumulated practical experience with over 20,000 customers in products such as computing boards, high-speed backplanes, and AI control systems, achieving a success rate 35% higher than the industry average. As chip architecture innovation and manufacturing system upgrades reinforce each other, the barriers to AI computing power will continue to fall, and the AI services we use will become cheaper, more responsive, and more capable. This invisible hardware revolution will ultimately reshape the foundation of the entire digital world.