Overview

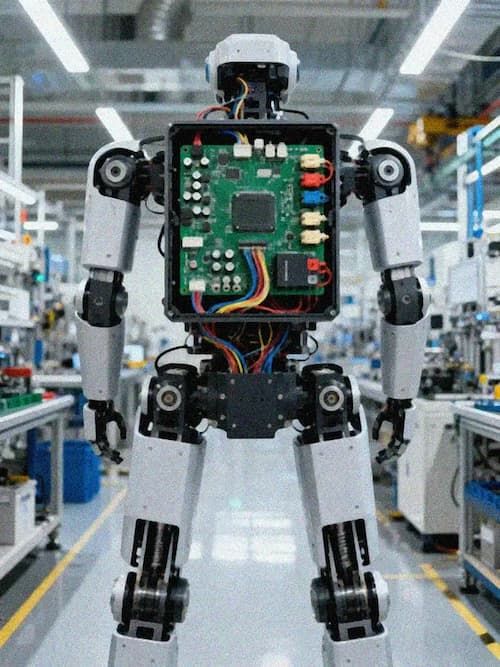

Edge AI computing deploys artificial intelligence inference and processing directly on edge devices close to where data is generated, rather than relying on remote cloud data centers. This avoids the latency of long-distance data transmission, cuts the bandwidth costs of cloud-based AI processing, and supports real-time, autonomous decision making on the device, making it a core enabling technology for autonomous machines and intelligent industrial systems.

Traditional edge computing hardware often suffers from insufficient AI processing power, limited high-speed data transmission capacity, and poor reliability in harsh field environments. Modern edge AI computing solutions use a heterogeneous accelerated computing architecture to handle complex AI workloads, with peak computing power ranging from 32 TOPS to 100 TOPS and up to 750 Gbps of high-speed I/O, while supporting stable long-term operation in demanding deployment scenarios. By addressing these pain points, they enable large-scale deployment of AI capabilities across a wide range of vertical sectors.

Technical Capabilities

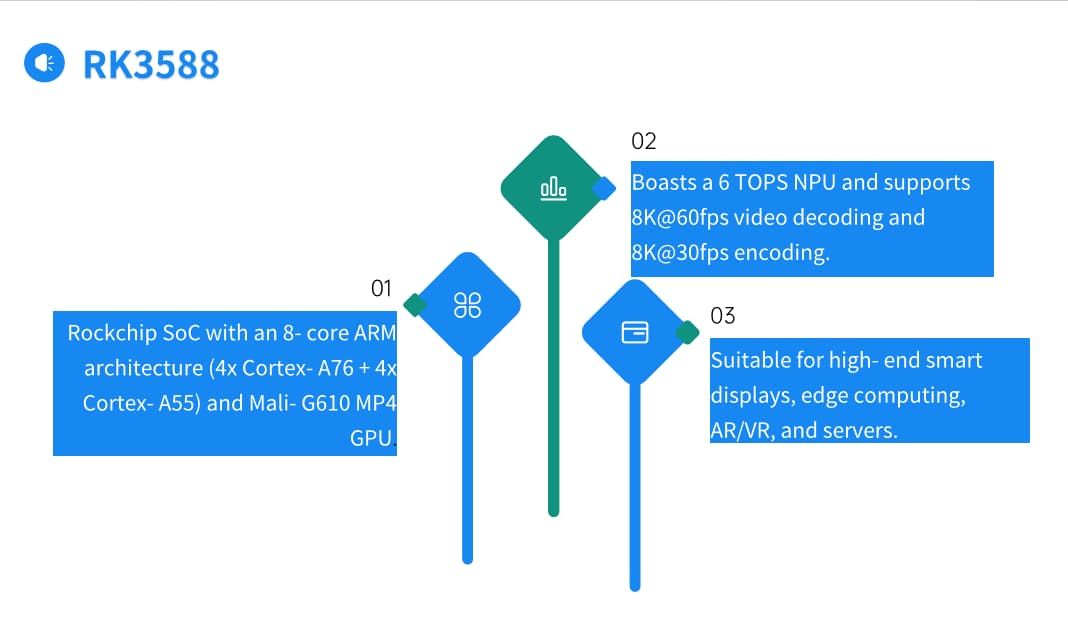

- Heterogeneous Accelerated Computing Architecture: Supports peak AI processing performance ranging from 32 TOPS to 100 TOPS, enabling parallel processing of complex deep learning models, computer vision workloads, and real-time sensor data analysis without relying on cloud connectivity. The architecture is optimized for edge-side resource constraints, balancing high computing performance and low power consumption, making it suitable for battery-powered and mobile edge device deployment.

- High-Speed I/O Support: Delivers up to 750 Gbps of high-speed I/O, supporting high-bandwidth transmission of multi-sensor data including 4K/8K camera feeds, LiDAR point clouds, and radar data, so that real-time AI inference runs at low latency without dropped data. The interface design is compatible with mainstream high-speed transmission protocols, supporting data exchange between computing units, high-bandwidth memory, and peripheral devices.

- Rich Peripheral Interface Compatibility: Equipped with a full range of common I/O interface types, supporting direct connection of mainstream industrial sensors, communication modules, and actuators, which simplifies integration during application development. The modular interface design can be configured for scenario-specific requirements, reducing secondary development costs for system integrators.

- Ruggedized Design Adaptability: Supports lightweight, rugged aluminum alloy housings with optimized thermal management and mounting structures for easy field installation, suited to harsh deployment environments including outdoor sites, factory floors, and mobile equipment. The compact form factor fits space-constrained edge devices such as robots, vehicles, and field monitoring terminals.

- High Reliability Operation: Optimized circuit and system design delivers a high mean time between failures (MTBF), supporting continuous 24/7 operation and reducing downtime and maintenance costs for field-deployed AI systems. The design also supports fault self-detection and self-recovery mechanisms, keeping the system running under unstable power supply and fluctuating network conditions.
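As a rough illustration of how the I/O figure above relates to multi-sensor workloads, the following minimal sketch estimates raw sensor data rates and compares their sum against the quoted 750 Gbps upper bound. The frame rate, bit depth, and the LiDAR and radar rates are illustrative assumptions, not product specifications, and treating the I/O figure as one shared budget is a simplification, since it may be spread across several interfaces.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SensorStream:
    name: str
    gbps: float  # raw data rate in gigabits per second


def raw_video_gbps(width: int, height: int, bits_per_pixel: int, fps: int) -> float:
    """Uncompressed video data rate in Gbps (1 Gbps = 1e9 bits/s)."""
    return width * height * bits_per_pixel * fps / 1e9


def io_utilization(streams: list[SensorStream], io_budget_gbps: float) -> float:
    """Fraction of the I/O budget consumed by all streams together."""
    return sum(s.gbps for s in streams) / io_budget_gbps


IO_BUDGET_GBPS = 750.0  # upper figure quoted in the text, treated as one budget

# Assumed sensor set: 24-bit color at 30 fps; LiDAR and radar rates are placeholders.
streams = [
    SensorStream("4K camera", raw_video_gbps(3840, 2160, 24, 30)),
    SensorStream("8K camera", raw_video_gbps(7680, 4320, 24, 30)),
    SensorStream("LiDAR", 0.1),
    SensorStream("radar", 0.05),
]

for s in streams:
    print(f"{s.name}: {s.gbps:.2f} Gbps")
print(f"I/O utilization: {io_utilization(streams, IO_BUDGET_GBPS):.1%}")
```

In practice, per-interface limits and protocol overhead matter more than the aggregate figure, so a check like this is only a first-pass sizing aid.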

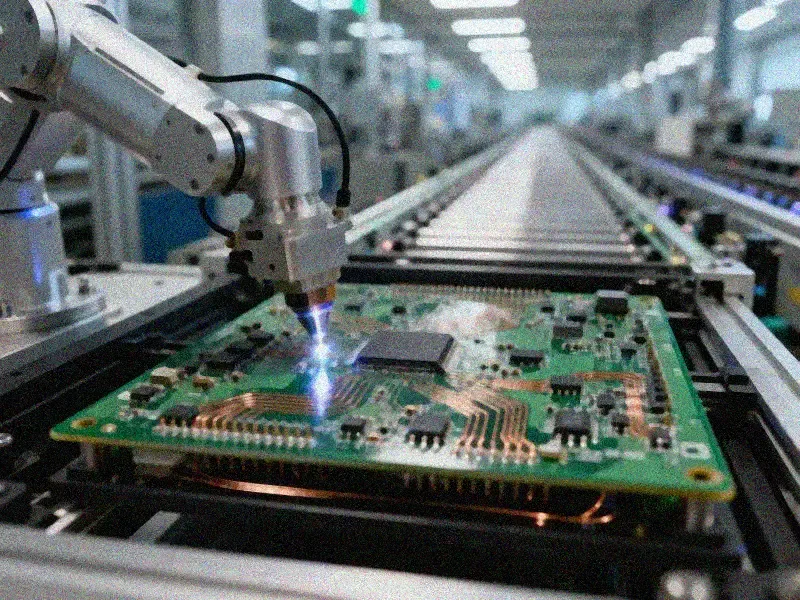

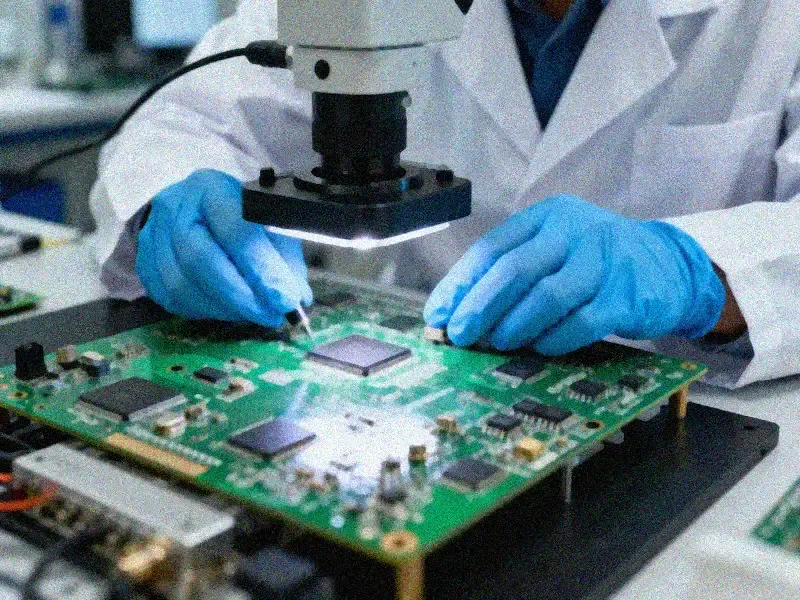

Quality Standards

- Signal Integrity Validation: All edge AI computing hardware designs undergo full signal integrity testing, including impedance control verification, crosstalk testing, and high-speed transmission performance validation, to ensure stable data transmission between computing cores, memory, and peripheral interfaces. Trace impedance is controlled to the 100 Ω and 90 Ω targets, reducing signal reflection and transmission loss.

- Environmental Reliability Testing: Products pass comprehensive high- and low-temperature operation testing, vibration and shock testing, and dust and water ingress testing, meeting industrial-grade requirements for operation at temperatures from -40°C to +85°C. The testing process simulates real-world deployment conditions to verify product stability across different application scenarios.

- EMC Compliance: Designs meet global EMC standards for industrial and automotive applications, ensuring stable operation even in high-electromagnetic-interference environments such as factory floors, power facilities, and transportation hubs. The design includes targeted electromagnetic shielding measures, reducing electromagnetic radiation interference to and from other connected devices.

- Performance Benchmark Testing: Each hardware unit undergoes full-load performance testing under real AI workload conditions before delivery, verifying that peak computing performance, inference latency, and power consumption meet design specifications. The tests cover common AI workload types including image recognition, natural language processing, and sensor data analysis, confirming compatibility with mainstream AI models.
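To make the link between impedance control and signal reflection concrete, here is a minimal sketch using the standard transmission-line reflection coefficient, Γ = (Z_L − Z_0) / (Z_L + Z_0). The ±10% tolerance is an assumed example value, not a figure from the quality standards above.

```python
import math


def reflection_coefficient(z_load: float, z0: float) -> float:
    """Voltage reflection coefficient at an impedance discontinuity."""
    return (z_load - z0) / (z_load + z0)


def return_loss_db(gamma: float) -> float:
    """Return loss in dB; larger values mean less reflected energy."""
    if gamma == 0:
        return math.inf
    return -20.0 * math.log10(abs(gamma))


TOLERANCE = 0.10  # assumed +/-10% manufacturing tolerance, for illustration only

for target_ohm in (100.0, 90.0):  # impedance targets named in the text
    worst_case = target_ohm * (1 + TOLERANCE)
    gamma = reflection_coefficient(worst_case, target_ohm)
    print(
        f"target {target_ohm:.0f} ohm, actual {worst_case:.0f} ohm: "
        f"gamma={gamma:.4f}, return loss={return_loss_db(gamma):.1f} dB"
    )
```

A tighter tolerance gives a smaller reflection coefficient, which is why impedance verification is part of signal integrity validation.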

Applications

Edge AI computing solutions are applicable across a wide range of industry scenarios, including but not limited to:

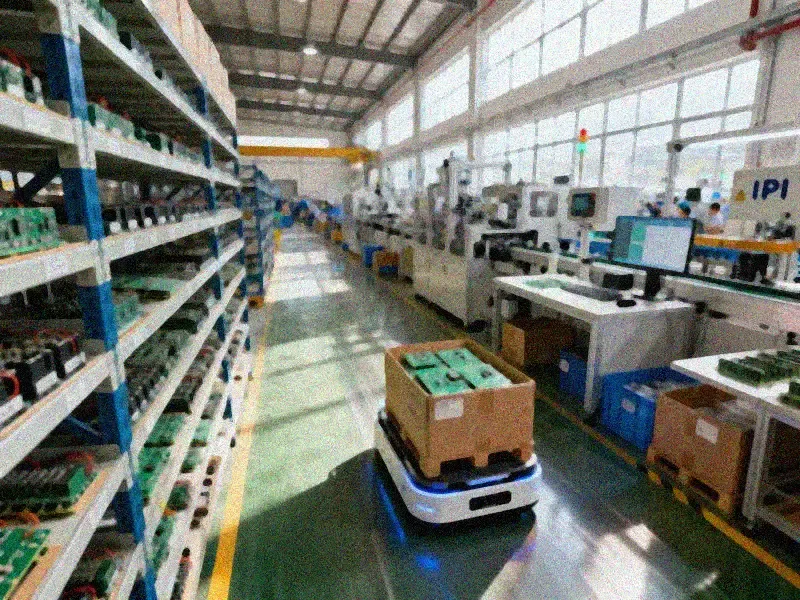

- Manufacturing: Autonomous material handling robots, intelligent quality inspection systems for production lines, predictive maintenance edge terminals for industrial equipment, intelligent workshop environment monitoring systems.

- Logistics: Autonomous delivery vehicles, intelligent warehouse sorting systems, intelligent monitoring terminals for logistics parks, intelligent temperature and humidity control systems for cold chain transportation.

- Smart Cities: Intelligent road inspection systems, smart community security monitoring terminals, low-altitude defense systems, smart building energy management systems, intelligent urban traffic flow control systems.

- Agriculture: Smart farming monitoring and control terminals, intelligent agricultural inspection robots, intelligent livestock health monitoring systems, farmland soil and water quality monitoring systems.

- Healthcare: AI-assisted diagnostic edge terminals, intelligent medical inspection robots, intelligent hospital service robots, medical cold chain monitoring systems.

- Retail and Services: Intelligent customer service robots, in-store consumer behavior analysis terminals, food safety inspection systems for smart catering, intelligent service terminals for public spaces.

- Transportation: Autonomous driving perception and decision units, intelligent traffic monitoring and management terminals, intelligent operation management systems for public transport, intelligent parking management systems.

Key Advantages

- Balanced Performance and Cost: Optimized hardware design balances high-performance AI computing with cost efficiency, making edge AI deployment accessible for projects of any scale, from small pilots to mass deployment. The design omits functional modules that edge scenarios do not need, reducing hardware costs without compromising core performance.

- Flexible Deployment Support: Modular design supports customization of computing power, interface configurations, and housing designs to meet the requirements of different industry use cases. The solution is available both as off-the-shelf standard products for rapid deployment and as fully customized designs for scenario-specific requirements, reducing time-to-market for new edge AI products.

- Low Latency Inference: Processing AI workloads on the edge side reduces end-to-end latency to milliseconds, meeting the real-time decision-making requirements of time-sensitive applications such as autonomous driving and industrial control. Low latency allows timely response to dynamic changes in the operating environment, improving the safety and reliability of autonomous systems.

- Reduced Operational Costs: Processing data locally avoids transmitting large volumes of raw data to the cloud, cutting bandwidth costs and reducing cloud computing resource consumption. Edge-side processing also reduces reliance on stable network connectivity, making deployment possible in remote areas with limited network coverage.

- Data Security Enhancement: Sensitive raw data is processed locally on the edge side, reducing the risk of data leakage during transmission to and storage on cloud platforms, and helping meet strict data security and privacy compliance requirements in industries such as healthcare, finance, and government. Local data processing also supports compliance with data localization regulations in different regions.
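The latency advantage described above can be sketched as a simple budget comparison between a cloud round trip and on-device inference. All payload sizes, link speeds, and timing values below are hypothetical placeholders chosen for illustration, not measured results.

```python
def transfer_ms(payload_bytes: int, link_mbps: float) -> float:
    """Time to push a payload over a link, in milliseconds."""
    return payload_bytes * 8 / (link_mbps * 1e6) * 1e3


def cloud_path_ms(payload_bytes: int, uplink_mbps: float,
                  rtt_ms: float, inference_ms: float) -> float:
    """Upload time plus network round trip plus cloud-side inference."""
    return transfer_ms(payload_bytes, uplink_mbps) + rtt_ms + inference_ms


def edge_path_ms(inference_ms: float) -> float:
    """On-device inference only; no network hop is involved."""
    return inference_ms


# Hypothetical numbers: a 500 kB frame, 50 Mbps uplink, 40 ms round trip.
cloud = cloud_path_ms(payload_bytes=500_000, uplink_mbps=50.0,
                      rtt_ms=40.0, inference_ms=10.0)
edge = edge_path_ms(inference_ms=25.0)

print(f"cloud path: {cloud:.1f} ms")
print(f"edge path:  {edge:.1f} ms")
```

Even when cloud-side inference is faster than on-device inference, the upload and round-trip terms can dominate the total, which is the effect the latency and operational cost points above rely on.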

Contact Information

If you have any requirements related to edge AI computing hardware design, manufacturing, or customization, please get in touch with our technical team. We provide professional technical consulting, customized solution development, and full lifecycle support tailored to your use case. Our team can help you evaluate performance requirements, optimize design solutions, and support you through the entire process from prototype verification to mass production.